The Hallucination is Ours

Why "reasoning" models are just capital allocation disguised as introspection

I’m going to make a claim that sounds unfair. I will try to earn it by the time you reach the end.

I keep coming back to the same uncomfortable thought: the most persistent hallucination in modern AI is not produced by models. It’s produced by us.

As a species, we are exquisitely prone to anthropomorphizing fluent language; reading a speaker into speech is close to a cognitive reflex. Language is our highest-bandwidth social signal, so when it appears, we infer agency. We see coherent sentences strung together, and we imagine a mind.

And then we do something even stranger: we take the output of an optimization process and start philosophizing about it as if it were introspection. We ask how the model thinks, what it believes, whether it understands. We build entire interpretive frameworks around traces that were never meant to explain anything. Turing might be at fault here, though he didn’t get to read Rosenblatt’s work¹. In the meantime, the system is doing the least romantic thing imaginable: minimizing loss under constraints, shaped by whatever referee we handed it.

This essay is not an attempt to define intelligence. It is a small attempt to define what intelligence isn’t, at least in the way we keep talking about it. It is also a complaint. A quiet one, but a complaint nonetheless. Because we need these systems. The problems we face are real, messy, and not going away. Yet we remain strangely unclear about what we want from them. We oscillate between asking for general intelligence, superintelligence, or simply competence at specific tasks under specific constraints.

Beneath almost all of this, the mechanics are simple, and not that different from the pre-GPT-2 era. We take behavior that is complex and multi-objective and collapse it into a score. Then we organize the system and the institution around it to chase that score.

But to understand why we are suddenly surrounded by models that claim to “think” before they speak, you have to look away from the philosophy and look at the balance sheet.

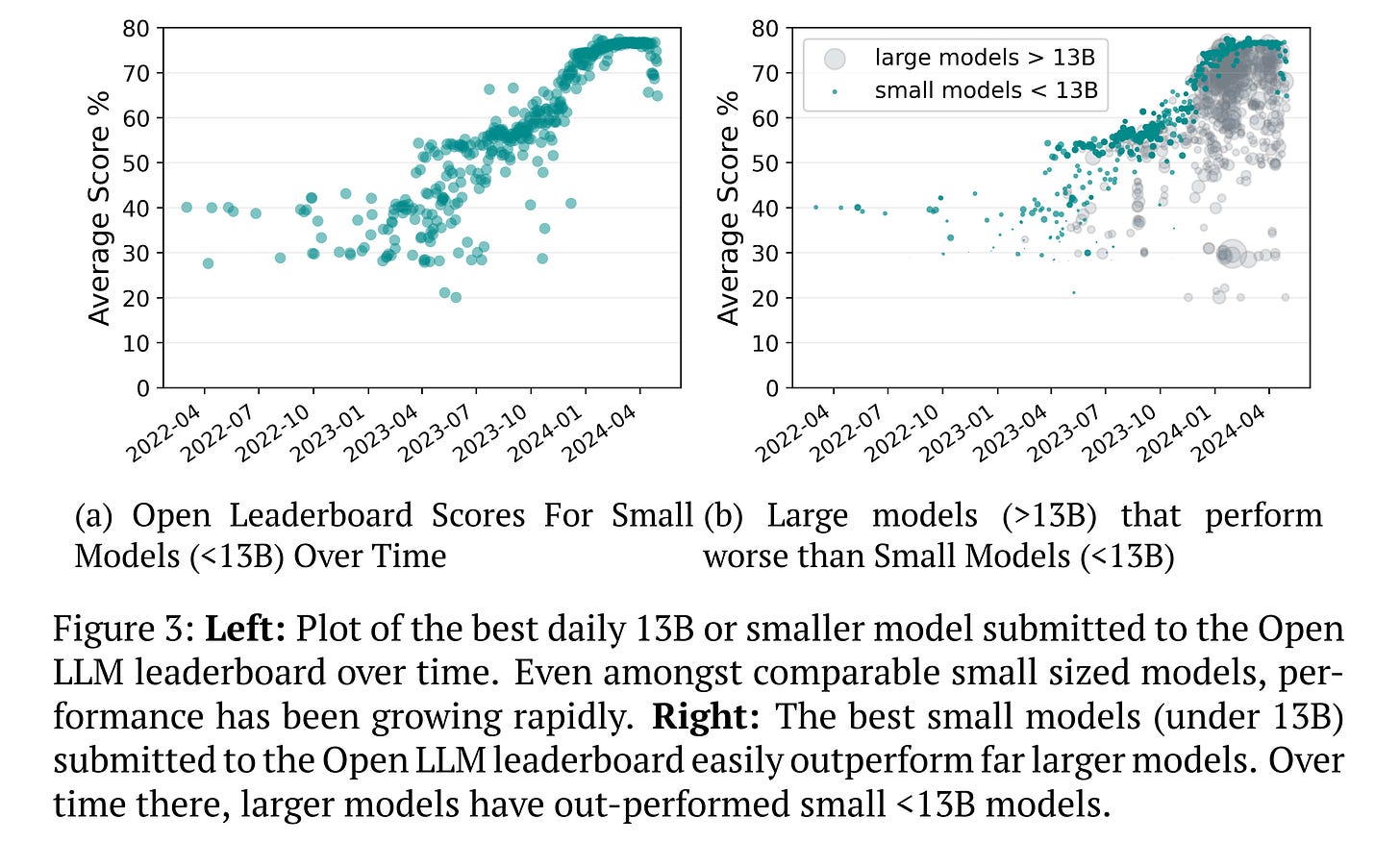

For a decade, the industry operated under the “Bitter Lesson”: the idea that the only thing that matters is scale. Pour more compute into training, get a smarter model. But as Sara Hooker² argues in “On the slow death of scaling” (2025), that era is ending: the returns on training ever-larger models are flattening. We are hitting a point where “bigger” is no longer synonymous with “better.”

Yet the capital hose is still on. The industry has mobilized billions of dollars for compute, and that energy has to go somewhere. If it cannot be profitably spent on training weights, it must be spent on inference generation.

This is the structural origin of the “reasoning model.” We are witnessing a massive shift in capital allocation that moves intelligence from the static weights of the model to the dynamic runtime of the generation. The model is being asked to “think” longer not necessarily because it is cognitively superior, but because inference is the only place left to burn the budget.

The problem is not the shift itself. Inference-time search is a valid way to solve hard problems; AlphaGo demonstrated that nearly a decade ago (Silver et al., 2016). The problem is that this economic pressure collides with a hard architectural reality.

We rely on Transformers, which are fundamentally amnesiacs. Unlike the Recurrent Neural Networks (RNNs) of the previous generation, which maintained an internal state that evolved over time, a Transformer is strictly feed-forward. It has no working memory that persists between steps. Despite the massive scaling of the last decade, the core architecture has remained essentially unchanged since its introduction in 2017. To “remember” an intermediate result (a carry in addition, a variable in logic), it must externalize it. It has to write the thought down into the context window so that it can read it back during the next forward pass.
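To make the “write it down or lose it” point concrete, here is a toy sketch. It is an analogy, not a Transformer: `next_line` is a hypothetical stateless function whose output depends only on the text it is handed, and the `ADD a b` / `digit carry` scratchpad format is something I made up for illustration. The carry survives between steps only because it was written into the context.

```python
def next_line(context: str) -> str:
    """Stateless step: everything it knows comes from `context`."""
    lines = context.strip().split("\n")
    _, a, b = lines[0].split()          # header line, e.g. "ADD 478 964"
    done = lines[1:]                    # scratchpad entries written so far
    column = len(done)                  # progress is inferred from the text
    width = max(len(a), len(b))
    # The carry is not remembered anywhere; it is read back from the last line.
    carry = int(done[-1].split()[1]) if done else 0
    if column == width:
        digits = "".join(entry.split()[0] for entry in reversed(done))
        return "ANSWER " + (str(carry) if carry else "") + digits
    digit_a = int(a[-1 - column]) if column < len(a) else 0
    digit_b = int(b[-1 - column]) if column < len(b) else 0
    total = digit_a + digit_b + carry
    return f"{total % 10} {total // 10}"   # "digit carry"


def run(a: int, b: int) -> str:
    """Generation loop: the growing context is the only memory."""
    context = f"ADD {a} {b}"
    while True:
        line = next_line(context)
        context += "\n" + line
        if line.startswith("ANSWER"):
            return context


print(run(478, 964))
```

Edit a carry in the scratchpad text and the next step happily continues from the edited value. There is no other place for the state to live.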

This creates a convergence of capital and architecture. We have a model that must generate tokens to maintain a train of thought, and an industry willing to pay for infinite tokens to sustain that illusion.

In this regime, the algorithm searches for statistical policies that reduce the probability of failure. One such policy is verbosity. Longer chains of thought function as a hedge. More tokens mean more chances to restate the problem, to back up, or to stumble into a consistent completion.

Proponents are right to call this a “scratchpad”—a necessary extension of the model’s working memory (Merrill & Sabharwal, 2023). But look at the nature of the scratchpad we have provided. We force the system to store its state variables in the high-entropy medium of natural language. It is like asking a system to perform matrix multiplication, but requiring it to narrate the process in the form of a persuasive essay. The system might reach the correct answer, but the process is inevitably filled with rhetorical drift and “narrative plausibility” that serves the linguistic form rather than the logic.

Under a sparse reward signal—where the only feedback is whether the final answer matched the test set—longer “thinking” becomes a cheap strategy. The cost of extra tokens is externalized to the inference budget, while the reward is binary and unforgiving.
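A back-of-the-envelope sketch of why this is cheap. The assumptions are mine and deliberately crude: a trace contains `k` independent attempts, each lands on a correct completion with probability `p`, and the reward is 1 if any attempt lands. The numbers are invented for illustration.

```python
def expected_reward(p: float, k: int) -> float:
    """Probability that at least one of k independent attempts succeeds."""
    return 1.0 - (1.0 - p) ** k


def best_length(p: float, cost_per_attempt: float, max_k: int = 50) -> int:
    """Number of attempts that maximizes reward minus the cost the learner sees."""
    return max(
        range(1, max_k + 1),
        key=lambda k: expected_reward(p, k) - cost_per_attempt * k,
    )


# Cost externalized to the inference budget: longer is always (slightly) better.
print(best_length(p=0.3, cost_per_attempt=0.0))
# Cost charged to the objective: a finite optimum appears.
print(best_length(p=0.3, cost_per_attempt=0.05))
```

With the cost set to zero the optimizer runs to whatever cap it is given. The moment length costs something inside the objective, the preferred trace shrinks to a handful of attempts.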

The result is a pseudo-cognition signature, a long trace of “reasoning” that correlates with success in that particular training setup.

We then treat those traces as evidence of deeper thinking. We map them onto human deliberation, “System 2,” or whatever cognitive metaphor is fashionable this month. But the dynamics are explicitly mechanical. Recent work by Bounhar et al. (2025) shows that in these training regimes, mixing in enough easy examples implicitly regularizes the length of the output. The model learns that long answers are not always necessary to get the reward, so it shortens them. We are not discovering the natural length of a thought. We are shaping the length of the trace by data selection because the reward loop otherwise drifts. We tune the trace so it looks reasonable to us, and then argue about whether the model thinks like a human.

This mechanism becomes even clearer when you look at language itself. There is a persistent claim that models can appear to “reason better” when the intermediate reasoning is carried out in Chinese or another non-English language. The temptation is to overinterpret this as a cultural or mystical property of language. But if we view the trace as an efficiency interface, a simpler explanation emerges.

The primary mechanism is representational. Tokenization and information density affect how much work fits into a fixed compute budget. Research into cross-lingual reasoning, such as the EfficientXLang study (Ahuja et al., 2025), reports that using non-English languages for intermediate steps can reduce token usage while preserving accuracy. When those traces are translated back into English, the advantage remains. This suggests the benefit is not about the “logic” of the language, but about the density of the search.

Even the observed distributional differences—where models code-switch to access knowledge or reasoning styles better represented in certain languages—point to training distribution and decoding dynamics rather than anything mystical. If you force cognition-shaped computation to live on a token stream, the properties of that stream will measurably change what “reasoning” looks like.

Planning over tokens drifts into search over narratives unless it is anchored to external checks. Tokens are a high-entropy interface because we cram facts, hedges, excuses, and slack into the same bandwidth. In the absence of verification, narrative plausibility becomes an attractor. The effective search landscape is defined by sequence length and branching factors, not by truth. Change the verifier sparsity, and you change the behavior that looks like “thinking.”

What makes me quietly angry about all this is what the setup selects for. A final reward for getting the answer right cannot tell you whether the model built a robust internal state. It can only tell you that, this time, the transcript satisfied the referee.

I worked through the mechanics of this in more detail in a previous post regarding the “Bad Referee.” When we compress a rich objective into a single number and optimize hard against it, the system learns the number, not the story we tell ourselves about what the number stands for.

The scientific literature is increasingly confirming this disconnect. Schaeffer et al. (2023) argue that ‘emergent capabilities’, where a model seemingly wakes up and gains a skill, are often mirages produced by nonlinear or discontinuous metrics. The underlying capability was improving smoothly the whole time; only the referee’s threshold made it look like magic. Bean et al. (2025) take this further, arguing that the benchmarks themselves often lack construct validity. We declare that a model has achieved ‘Reasoning’ because a score crept up, even if the benchmark effectively measures memorization or surface statistics. Yet we continue to treat these numbers as if a noisy measurement channel had become an ontology.
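The mirage is easy to reproduce on paper. The sketch below is in the spirit of the Schaeffer et al. argument, not a reproduction of their experiments: I assume per-token errors are independent, so an all-or-nothing exact-match score on a 30-token answer is just the per-token accuracy raised to the 30th power. The accuracy values are made up.

```python
def exact_match(per_token_accuracy: float, length: int) -> float:
    """All-or-nothing score: every one of `length` tokens must be right."""
    return per_token_accuracy ** length


# Per-token accuracy improves smoothly from one model size to the next...
smooth_progress = [0.80, 0.85, 0.90, 0.95, 0.99]

# ...but the thresholded metric sits near zero and then appears to jump.
for p in smooth_progress:
    print(f"per-token={p:.2f}  exact-match(30 tokens)={exact_match(p, 30):.3f}")
```

Nothing woke up between the third and the fifth row. The referee simply only counts perfect transcripts.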

Most of what is marketed as reasoning today sits on top of exactly that setup. We give models sparse, terminal rewards: pass the unit tests, match the final answer, satisfy the verifier. Everything in between is invisible to the objective. Under that kind of training, credit assignment becomes extremely hard. The system has to discover which parts of its internal process matter without getting feedback along the way.
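A minimal sketch of what “invisible to the objective” means in practice. Both functions are hypothetical illustrations, not any lab’s training code: one broadcasts the final outcome to every step, the other scores each step with its own check.

```python
from typing import List


def terminal_credit(num_steps: int, final_correct: bool) -> List[float]:
    """Sparse terminal reward: every step inherits the same outcome signal."""
    reward = 1.0 if final_correct else 0.0
    return [reward] * num_steps


def stepwise_credit(step_valid: List[bool]) -> List[float]:
    """Dense alternative: each step is scored by its own check."""
    return [1.0 if ok else 0.0 for ok in step_valid]


# A trace whose second step is wrong but whose final answer happens to match.
step_valid = [True, False, True, True]
print(terminal_credit(len(step_valid), final_correct=True))
print(stepwise_credit(step_valid))
```

Under the terminal signal the broken step is rewarded exactly as much as the sound ones. The referee cannot tell them apart because it never looked.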

Once you see the trace as an adjustable interface rather than a window into cognition, a different design question comes into focus. What kinds of intermediate objects do we ask the model to produce, and how tightly are those objects coupled to reality?

I have become convinced that the right direction is to stop treating free-form chains of thought as the main unit of reasoning and to push typed artifacts into the foreground instead.

A free-form chain of thought is a bad intermediate object because it is too expressive and too easy to fake. But typed artifacts can be gamed too if the reward structure stays terminal. Consider the trivial example of a model learning to call a calculator tool for simple arithmetic. There is a profound difference between a model that learns “calling the calculator correlates with lower failure rates” and one that is trained to recognize when calculation is actually necessary. The first is just another hedge under sparse reward. The second requires supervision on the intermediate decision itself.

This is where the supervision failure lies. The systems we build hallucinate narratives because we reward narratives. That is not a capabilities ceiling; it is a design choice. The question is not whether we can make the traces look more disciplined. It is what intermediate objects we should force the system to produce so that verification is frequent, mechanical, and difficult to charm.
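As a hedged illustration of what such an intermediate object could look like, here is a toy typed artifact for column-wise addition. `ColumnAdd` and `first_invalid` are names I invented for this sketch. Each step is a small record with fixed fields, and a checker can verify every transition mechanically instead of reading a narrative.

```python
from dataclasses import dataclass
from typing import List, Optional


@dataclass(frozen=True)
class ColumnAdd:
    """One typed step: add two digits plus an incoming carry."""
    a_digit: int
    b_digit: int
    carry_in: int
    digit_out: int
    carry_out: int


def check_step(step: ColumnAdd) -> bool:
    """A step is valid only if its outputs follow from its inputs."""
    total = step.a_digit + step.b_digit + step.carry_in
    return step.digit_out == total % 10 and step.carry_out == total // 10


def first_invalid(steps: List[ColumnAdd]) -> Optional[int]:
    """Return the index of the first broken transition, or None if all hold."""
    carry = 0
    for index, step in enumerate(steps):
        # The carry must chain from the previous step, and the step must add up.
        if step.carry_in != carry or not check_step(step):
            return index
        carry = step.carry_out
    return None


# 478 + 964, least significant column first.
trace = [ColumnAdd(8, 4, 0, 2, 1), ColumnAdd(7, 6, 1, 4, 1), ColumnAdd(4, 9, 1, 4, 1)]
print(first_invalid(trace))
```

There is no room in a `ColumnAdd` for hedges or rhetorical drift, and a wrong step is located by index rather than argued about. Whether this scales beyond toy arithmetic is exactly the open design question.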

My claim was unfair because the systems we build can only see narratives. We have not given them anything else to look at. Reasoning is not a construct that is currently supervised; it is merely performed. That is why the solution is not better soliloquies, but intermediate work that can actually be checked.

The next part looks at what it takes to build training and evaluation around checkable state transitions rather than around narration.

References

Bean, M., et al. (2025). Measuring what Matters: Construct Validity in Large Language Model Benchmarks. arXiv:2511.04703.

Bounhar, A., et al. (2025). Shorter but not Worse: Frugal Reasoning via Easy Samples as Length Regularization. arXiv:2511.01937.

Ahuja, S., et al. (2025). EfficientXLang: Cross-lingual reasoning as an efficiency interface. arXiv:2507.00246.

Hooker, S. (2025). On the slow death of scaling. [Preprint].

Li, Y., et al. (2025). Bilingual reasoning and code-switching in large language models. arXiv:2507.15849.

Silver, D., et al. (2016). Mastering the game of Go with deep neural networks and tree search. Nature, 529, 484–489.

Merrill, W., & Sabharwal, A. (2023). The Expressive Power of Transformers with Chain of Thought. arXiv:2310.07923.

Schaeffer, R., et al. (2023). Are Emergent Abilities of Large Language Models a Mirage? arXiv:2304.15004.

Zhang, B., et al. (2025). Language Specific Knowledge: Do Models Know Better in X than in English? arXiv:2505.14990.

¹ Rosenblatt’s Perceptron work came out in 1958, four years after Turing’s death. Here I am explicitly referring to the Turing test (1950).

² Hooker is former Head of Cohere Labs and former Research Scientist at Google DeepMind.